Universities are living through unprecedented and turbulent times. The current pandemic is forcing a reset of their entire operating model, with hybrid work – i.e., a mix of online and onsite activities – becoming the norm. Needless to say, these changes are also affecting research activities, as most labs have been forced to shut down or drastically reduce their experimental work.

As a consequence, a lot of effort has been put into revisiting the interactions between universities and their communities of students, researchers and academic staff, often leading to innovative digital ways to deliver education and research services while respecting social distancing constraints. We believe this “reset mood” provides a window of opportunity to redefine the management of research core facilities, an essential but often overlooked element of the performance of academic research.

In this post, I’d like to lay out the arguments in support of the modernisation and consolidation of the management of core facilities in universities and research institutions.

The essential role of core facilities for academic research

Research core facilities have come to play an essential part in modern science. Over the last 20 years, the cost and complexity of the technology involved in today’s experiments have forced the specialization of scientists and the pooling of instrumentation. No single lab can afford the investment in skills and equipment required to stay at the forefront of technology.

“Excellence in research is intimately linked to excellence in capability, and that is what the core research facilities are all about. They enable high-impact research through state-of-the-art infrastructure.”

The University of Sydney

This evolution has created new roles and organisational models where core facilities are actually providing services to a community of scientists, often for a fee. However, turning scientists and engineers into service providers is no easy feat, as this recent article in Nature illustrates. As Nature puts it, people running a core facility must develop a rare mix of complementary skills: “in-depth knowledge of the hardware they oversee, managerial and financial acumen to run what is effectively a business, and scientific know-how to guide researchers through a range of experimental systems and designs”.

In the summer of 2019, we conducted a survey with The Science & Medicine Group, a business intelligence firm specializing in science, to better understand how dependent research projects had been on core facilities and shared instrumentation.

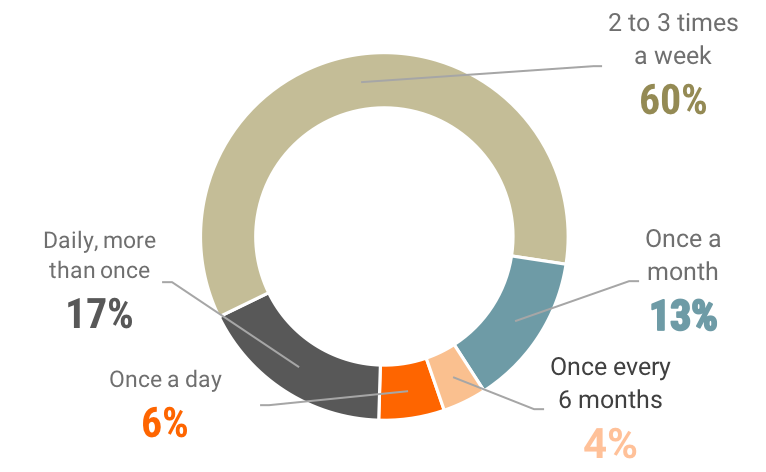

The chart shows how frequently researchers use shared instrumentation from core facilities (Source: Science & Medicine Group Survey for Keralia, September 2019).

If you’re interested in reading more, you may want to download the full survey here.

In the face of increased competition, core facilities enable research institutions to respond to their development challenges by fostering:

- The creation of a sustainable competitive advantage by staying at the forefront of technological development in their field.

- The acceleration of the innovation lifecycle, from the feasibility of an idea to experimentation.

- Collaborative work between research teams and sharing of methodologies and knowledge.

- The training of young scientists and their familiarization with new technologies.

Main challenges facing core facility managers

About a year ago, based on conversations with a number of core facility managers, we published an article that highlighted the main challenges confronting them. I believe that what they told us about their top-of-mind issues still holds true today, and even more so after a year of pandemic:

- Deliver quality services under tight timelines.

- Manage high volumes of internal and/or external users (all having different service and pricing options).

- Optimize back office work (usage tracking and billing often cited as monthly nightmares).

- Secure appropriate funding to maintain cutting-edge technology and expertise in their chosen field.

- Contribute to improving the management of scientific projects (data management and reproducibility of experiments being common pain points).

- Attract, train and retain talent (given the diversity of projects handled by a typical core facility, getting a postdoc up to speed can take over a year).

- Increase awareness and visibility of their services (at the end of the day, core facilities can bring substantial new sources of funding in a situation where university budgets are depressed).

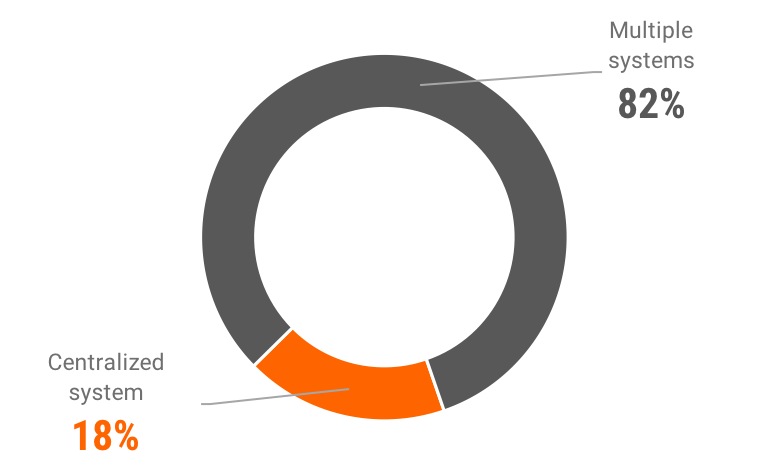

However, it turns out that in most academic institutions, core facilities still rely on a collection of disparate processes and manual tools (spreadsheets, emails, various landing pages, online forms, etc.) to run their business. Take booking and scheduling, for instance, a mandatory first step for any core facility user: our research shows that for over 80% of users, diversity of systems is the norm (see the chart from our above-mentioned survey).

This diversity of management systems results from a history of independently run core facilities going about their business as best they could, with limited financial capacity for anything but the acquisition and maintenance of instrumentation.

We believe this situation bears several consequences that are harming the overall performance of academic research:

- First, there is a significant productivity issue for researchers who are faced with diverse and cumbersome systems to access the resources provided by core facilities: Our survey shows that scientists can waste 5 hours per week on average on experiment design and scheduling (which comes down to 330,000 hours lost for research each year for a community of 1,500 scientists).

- On the core facility managers’ side, the same survey shows that they can waste on average 2 days per month on instrument usage tracking and billing (which comes down to roughly one working month lost for research each year).

- This legacy of diverse and mostly incompatible systems makes it extremely difficult, if not impossible, to get a consolidated view on instrumentation capacity at university level, and thus to provide insights and support sound decision-making on technology investments.

- Project and data management are highly impacted when the time comes for research evaluation and publication, as experiment and data traceability require a good amount of manual reconciliation work, often time-consuming and error-prone.

- For lack of easy integration, core facilities might be left out of the digital initiatives that have sprung up on many campuses to deal with the pandemic situation and the “new normal” in terms of interactions with student and research communities.

- Lastly, poor user experiences and low visibility of their services put core facilities on the back foot when it comes to developing business with external customers as a new source of funding.
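The two headline figures in the list above can be checked with simple arithmetic. The sketch below is an illustration only: the figure of 44 working weeks per year is an assumption, chosen because it is the value that makes 5 hours per week across 1,500 scientists come out at 330,000 hours; the function names are hypothetical.

```python
def annual_hours_lost(hours_per_week, scientists, working_weeks=44):
    """Hours lost per year across a research community.

    working_weeks=44 is an assumption, not a survey figure.
    """
    return hours_per_week * scientists * working_weeks


def annual_days_lost(days_per_month, months=12):
    """Working days lost per year by one core facility manager."""
    return days_per_month * months


# 5 hours per week for a community of 1,500 scientists
print(annual_hours_lost(5, 1500))  # 330000

# 2 days per month spent on usage tracking and billing
print(annual_days_lost(2))  # 24
```

Twenty-four working days is a little more than a typical working month, which is where the "one month lost each year" estimate comes from.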

Building a case for change

At Keralia, we believe that a modern cloud platform can help address most of the issues we’ve been discussing above, provided that this platform comes with 3 essential components:

- Consumer-grade user experience based on a service portal where scientists can access, in a couple of clicks, any resource they need to do their daily work (instrumentation, training and technical advice, consumables, computing services, protocols and procedures, knowledge and best practices, etc.).

- Easily customisable, no-code workflow engine to automate as many non-value-adding tasks as possible and enable connected experimental workflows.

- One single system of record providing a holistic view on the state and utilization of research technology resources, for individual core facilities and campus-wide.

Transitioning from disparate legacy systems to one single cloud platform that brings all core facilities under a common management system may seem like a daunting endeavour, but the journey comes with a number of benefits. The business value of such projects has been confirmed in other areas: for example, the consolidation of IT management systems, historically implemented in silos, has drastically improved the quality of IT services whilst reducing IT costs.

Let’s take a closer look at the most obvious benefits in the context of academic research:

- The cost of maintaining a set of heterogeneous software applications is huge. Numerous studies have been produced on the business value of so-called rationalisation projects, which have been one of the main drivers of IT cost reduction in the last two decades. According to Forrester’s Total Economic Impact studies, for instance, reduced and/or avoided IT costs from such projects can reach $2M per year in an organization serving about 15,000 users, the size of a typical university. Those cost reductions derive from reduced effort on IT support, avoided development costs (on integrations, data consolidation and reporting, and new features) and avoided annual costs for legacy applications which are replaced by the common platform.

- A common platform brings all research instrumentation under one single asset management system. In such a setup, each core facility retains full control over its equipment, while consolidated views are available in a couple of clicks for each organisational layer, up to the entire university. This has a significant impact on the overall cost of asset management and creates efficiencies such as improved capacity management (an instant view of under-utilized equipment, for instance), the ability to negotiate better conditions on instrument maintenance and/or purchasing contracts, and avoided external rental costs when equivalent equipment is available internally.

- Having all core facilities on the same platform enables technical specialists to share best practices and methods. Platform components, like custom workflows or instrument connectors, can easily be duplicated and reused, avoiding time-consuming rework and shortening the learning curve.

- On the users’ side, we’ve mentioned above the impact on scientists’ productivity of having to juggle heterogeneous and/or poor scheduling systems. We estimate that a modern service portal can yield time savings in the range of 10-15%. Just imagine what else you could achieve with 10% more researchers!

- Cutting through manual data wrangling is another bonanza. Researchers may spend 15 hours per week on data manipulation (acquiring datasets from instruments, checking and moving data between applications, etc.). We estimate that workflow automation on a common platform may save at least 50% of this time, that is one more day gained each week!

- And there’s more to it than time savings: A common platform can foster collaborative work, plus data and knowledge sharing in a much easier way than a bunch of separate applications and data silos.

- Lastly, investing in a consumer-grade service portal might help improve the top line by making core facilities more visible to potential external customers and easier to do business with.
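The time-saving estimates in the list above can be turned into a quick back-of-the-envelope calculation. This is a rough sketch rather than a model: it reuses the community of 1,500 scientists mentioned earlier in the post purely as an illustrative size, and the function names are hypothetical.

```python
def weekly_hours_saved(hours_per_week, percent_saved):
    """Hours recovered per researcher per week through automation."""
    return hours_per_week * percent_saved / 100


def researcher_equivalents(scientists, percent_saved):
    """Time savings expressed as additional full-time researchers."""
    return scientists * percent_saved / 100


# 15 hours per week of data wrangling, 50% of it automated
print(weekly_hours_saved(15, 50))  # 7.5

# 10-15% time savings from a service portal, for 1,500 scientists
# (illustrative community size taken from the survey example)
print(researcher_equivalents(1500, 10))  # 150.0
print(researcher_equivalents(1500, 15))  # 225.0
```

Seven and a half hours is about one working day, which matches the "one more day gained each week" estimate.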

Key takeaways

- In the last 15 years, core facilities have taken an essential role in the performance and competitiveness of modern research.

- However, in many universities, the software tools on which core facility managers run their business are falling far short of the mark.

- A modern cloud platform can help, provided it comes with a consumer-grade service portal, an easily customisable workflow engine and one single system of record.

- Although such consolidation projects might seem daunting, their business value can be demonstrated in order to provide a solid case for change.

Want to learn more about our collaborative lab management cloud platform? Visit the newLab page here.